Key Features

Observability

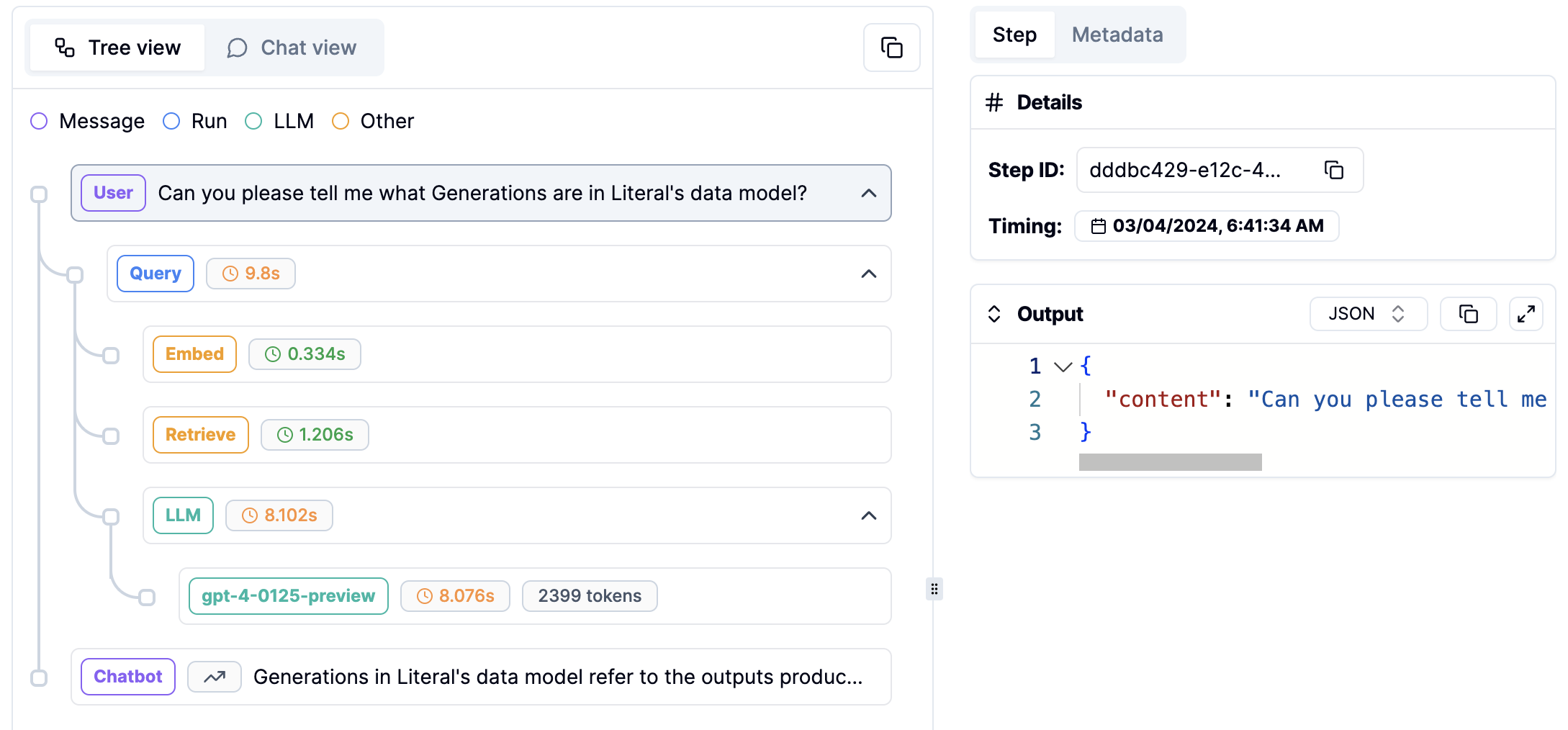

Monitor your LLM app (including steps, feedback, prompts, and token consumption) within minutes using our SDKs. Literal provides a unified view of all your data in one place.

Dataset

Create datasets that mix production data with handwritten examples, then use them to run non-regression tests.

Online Evaluations

Evaluate your threads and runs in real time using off-the-shelf and custom evaluators.

Prompt Collaboration

Safely design, try, debug, version and deploy prompts directly from Literal.

Next up

Get Started

Install the Literal SDK and get your API key.

Learn more about integrations

Learn how to use OpenAI, LangChain, and Chainlit with Literal.

More

Use this documentation to:

- Learn: Concepts, Integrations

- Find SDK references: Python, TypeScript

- Get inspired: Examples, Blog